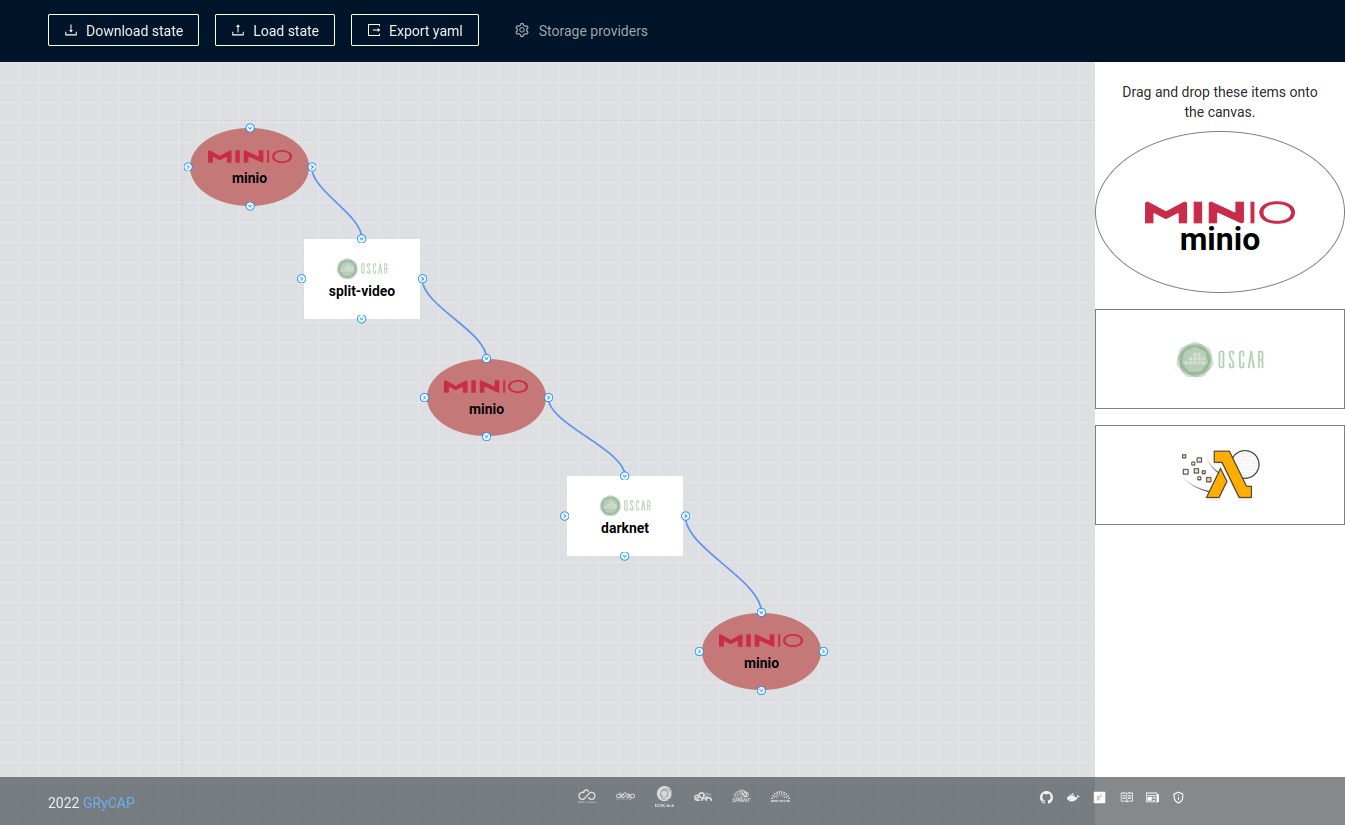

FDL-Composer is a tool for visually designing workflows for OSCAR and SCAR. This walkthrough recreates the video-process example.

This example supports highly scalable, event-driven video analysis, using ffmpeg to extract keyframes from the video and Darknet to perform object recognition on those keyframes. It requires two OSCAR services: one that splits the video into frames and another that processes those frames. It also requires three MinIO buckets: one for the input, one for the output, and one that connects the two services. When a video is uploaded to the input bucket, the “split video” OSCAR service is triggered and leaves the frames in the intermediate bucket. That in turn triggers the “processing frame” OSCAR service, which stores its result in the output bucket.
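The event chain described above can be illustrated with a small in-memory sketch. Nothing here talks to OSCAR or MinIO: the `Buckets` class, the `split_video` and `detect_objects` functions, the bucket names `in`, `med` and `out`, and the file names are all hypothetical stand-ins chosen for illustration.

```python
from collections import defaultdict


class Buckets:
    """Toy in-memory stand-in for MinIO buckets with upload triggers."""

    def __init__(self):
        self.objects = defaultdict(list)  # bucket name -> uploaded object names
        self.triggers = {}                # bucket name -> handler(store, object_name)

    def on_upload(self, bucket, handler):
        # Register the service that should run when an object lands in `bucket`.
        self.triggers[bucket] = handler

    def upload(self, bucket, name):
        # Store the object, then fire the bucket's trigger if one is registered.
        self.objects[bucket].append(name)
        handler = self.triggers.get(bucket)
        if handler is not None:
            handler(self, name)


def split_video(store, video, keyframes=3):
    # Stand-in for the ffmpeg step: emit a fixed number of fake keyframes.
    stem = video.rsplit(".", 1)[0]
    for i in range(keyframes):
        store.upload("med", f"{stem}-frame{i}.jpg")


def detect_objects(store, frame):
    # Stand-in for the Darknet step: write one result per incoming frame.
    store.upload("out", frame.replace(".jpg", "-detected.jpg"))


store = Buckets()
store.on_upload("in", split_video)      # input bucket triggers the split service
store.on_upload("med", detect_objects)  # intermediate bucket triggers detection
store.upload("in", "demo.mp4")
print(store.objects["out"])
```

Uploading a single video to `in` fills `med` with frames, and each frame in turn produces one entry in `out`, which mirrors how the intermediate bucket links the two services.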

Drag the OSCAR functions we are going to use onto the canvas; in this case, two.

Double-click each node and fill in its input fields.

| Split Video | Object Detection |
|---|---|
| | |

Create a new MinIO storage.

Drag three MinIO buckets onto the canvas.

Connect all the components to form the workflow.

Give each bucket a name.

| Input Bucket | Medium Bucket | Output Bucket |
|---|---|---|
| | | |

Export the workflow as YAML.

Here is the result:

```yaml
functions:
  oscar:
  - oscar-cluster:
      name: split-video
      memory: 1Gi
      cpu: '1'
      image: grycap/ffmpeg
      script: split-video.sh
      input:
      - path: video-process/in
        storage_provider: minio.minio
      output:
      - path: video-process/med
        storage_provider: minio.minio
  - oscar-cluster:
      name: darknet
      memory: 1Gi
      cpu: '1'
      image: grycap/darknet-v3
      script: yolov3-object-detection.sh
      input:
      - path: video-process/med
        storage_provider: minio.minio
      output:
      - path: video-process/out
        storage_provider: minio.minio
storage_providers:
  minio:
    minio:
      endpoint: 'http://localhost:443'
      region: us-east-1
      access_key: minio
      secret_key: miniopassword
      verify: false
```

This FDL file can be used as input to OSCAR in order to deploy the services within a specific OSCAR cluster.
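Before deploying, it can be useful to confirm that the exported file wires the services together as intended. The sketch below is one possible check, not part of FDL-Composer or OSCAR. It transcribes the relevant fields of the exported FDL into a Python dict (so no YAML library is needed), and the helpers `service_chain` and `undefined_providers` are hypothetical names. It also assumes that a `storage_provider` value such as `minio.minio` reads as provider type followed by provider name.

```python
# Relevant parts of the exported FDL, transcribed by hand as a Python dict.
fdl = {
    "functions": {
        "oscar": [
            {"oscar-cluster": {
                "name": "split-video",
                "input": [{"path": "video-process/in", "storage_provider": "minio.minio"}],
                "output": [{"path": "video-process/med", "storage_provider": "minio.minio"}],
            }},
            {"oscar-cluster": {
                "name": "darknet",
                "input": [{"path": "video-process/med", "storage_provider": "minio.minio"}],
                "output": [{"path": "video-process/out", "storage_provider": "minio.minio"}],
            }},
        ]
    },
    "storage_providers": {"minio": {"minio": {"endpoint": "http://localhost:443"}}},
}


def services_of(fdl):
    # Flatten the per-cluster entries into a plain list of service definitions.
    return [svc for entry in fdl["functions"]["oscar"] for svc in entry.values()]


def service_chain(fdl):
    """Return (producer, path, consumer) for each path one service writes and another reads."""
    services = services_of(fdl)
    links = []
    for producer in services:
        for out in producer.get("output", []):
            for consumer in services:
                if any(inp["path"] == out["path"] for inp in consumer.get("input", [])):
                    links.append((producer["name"], out["path"], consumer["name"]))
    return links


def undefined_providers(fdl):
    """List storage_provider references that have no entry under storage_providers."""
    # Assumption: references are written as "<type>.<name>".
    defined = {
        f"{kind}.{name}"
        for kind, entries in fdl["storage_providers"].items()
        for name in entries
    }
    used = {
        io["storage_provider"]
        for svc in services_of(fdl)
        for key in ("input", "output")
        for io in svc.get(key, [])
    }
    return sorted(used - defined)


print(service_chain(fdl))        # [('split-video', 'video-process/med', 'darknet')]
print(undefined_providers(fdl))  # []
```

A single link through `video-process/med` and an empty list of undefined providers indicate that the workflow matches the design. The file would then typically be applied with OSCAR's command-line client (for example `oscar-cli apply`), assuming it is installed and configured for the target cluster.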

FDL Composer, OSCAR and SCAR are developed by the GRyCAP research group at the Universitat Politècnica de València.